An agile process is any approach that promotes frequent interaction between an IT department and business users, and tries to regularly build, test and deliver software that meets business users' changing requirements. Agile is all about short, flexible development cycles that respond quickly to customer demand. Doing this effectively often involves building a two-way DevOps software pipeline between you and your customers, so that high-quality software reaches them quickly and their feedback reaches you just as quickly.

The goal of most Continuous Delivery (CD) projects is to automate as many manual processes in the software development process as possible. Among the roadblocks that slow deployment in a DevOps CD pipeline are error-prone manual processes, such as handoffs from a development group to a QA group, including ones that require signatures or bureaucratic approval. These kinds of handoffs signal a lack of shared ownership of the end product, which runs contrary to the basic agile testing and development principle that all members of a cross-functional agile team are equally responsible for the quality of the product and the success of the project. Because of this, testing on an agile project is done by the whole team, including members whose primary expertise may be in programming, business analysis, databases or system operations, and not just by designated testers or quality assurance professionals.

Challenge: Deciding What Tests to Automate

If your organization is building a DevOps software pipeline, or already has one in place, you're well on your way to applying best practices in agile testing, continuous integration and test-driven development to accelerate your QA processes and reduce cycle time. This includes automating as many tests as possible, including GUI, API, integration, component and unit tests.

The Agile Test Automation Pyramid

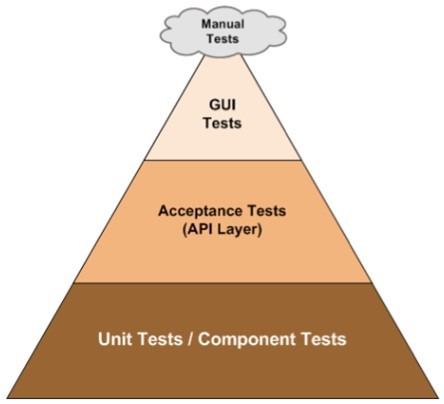

Use the agile test automation pyramid as a strategy guide for automating software tests in a Continuous Delivery pipeline.

Image Source: Codylab

The testing pyramid is a popular strategy guide that QA teams use when planning their testing efforts. As shown in the illustration above, the base, or largest section, of the pyramid is made up of unit tests, which will be the case if developers in your organization are integrating code into a shared repository several times a day. Continuous integration of this kind involves running unit tests, component tests (unit tests that touch the filesystem or database), and a variety of acceptance and integration tests on every check-in.
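As a rough illustration of running these tiers on every check-in, the sketch below runs stages in order from the base of the pyramid upward. The fail-fast behavior and the stand-in lambdas are assumptions made for the example; a real pipeline would invoke actual test suites through its CI tool.

```python
from typing import Callable, List, Tuple

# Each stage pairs a name with a callable that returns True when its tests pass.
Stage = Tuple[str, Callable[[], bool]]


def run_on_check_in(stages: List[Stage]) -> List[Tuple[str, bool]]:
    """Run stages in order, stopping at the first failure (fail-fast)."""
    results: List[Tuple[str, bool]] = []
    for name, run_tests in stages:
        passed = run_tests()
        results.append((name, passed))
        if not passed:
            break  # later, slower stages are skipped
    return results


# Hypothetical stand-ins for real suites, ordered from the base of the pyramid up.
stages: List[Stage] = [
    ("unit", lambda: True),
    ("component", lambda: True),
    ("acceptance", lambda: False),  # simulate a failing acceptance test
    ("gui", lambda: True),
]

results = run_on_check_in(stages)
```

Ordering the cheap, fast tests first means a broken check-in is usually reported before the slower stages start.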

If your developers are practicing Test-Driven Development (TDD), they'll have already written unit tests to verify that each unit of code does what it's supposed to do. Unit and component tests are the easiest tests to automate. They usually run as part of an automated build, where they give developers immediate feedback about potential issues in the latest release.
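A minimal sketch of such a unit test, using Python's standard `unittest` module, might look like the following. The `apply_discount` function and its rules are hypothetical and exist only to give the tests something to verify.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical unit under test: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_applies_percentage(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_rejects_invalid_percentage(self):
        with self.assertRaises(ValueError):
            apply_discount(200.0, 150)


def run_suite() -> unittest.TestResult:
    """Run the tests programmatically, as an automated build step might."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

In TDD the two test methods would be written first and fail until `apply_discount` is implemented.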

The middle of the pyramid consists of acceptance and GUI integration tests, which should make up a smaller share of the automated tests you create. Following the Test Automation Pyramid too literally, however, can create challenges in implementing, and budgeting for, a Continuous Delivery automation project. The order in which your organization automates tests should follow business priorities, meaning you may need to spend more time and money automating GUI tests if your software users expect a fast, rich and easy user interface experience. If you're developing an app for an Internet of Things (IoT) device that primarily talks to other IoT devices, automation of GUI testing is less of an issue.

At the top, or eye, of the pyramid are the last tests that should be considered for automation: manual exploratory tests. In exploratory testing, the tester actively controls the design of the tests as they are performed and uses information gained while testing to design new and better tests. It is done in a more freestyle fashion than scripted automated testing, where test cases are designed in advance. Manual testing is important on agile projects because it allows the QA tester to combat a tendency toward groupthink on an agile team by playing the role of critical evaluator, or "devil's advocate," and asking tough, "what if"-type testing questions that challenge the rest of the team's decisions and choices. Too many manual test steps, however, will likely slow down your delivery pipeline and keep you from scaling up your CD implementation. Modern quality management software makes it possible to semi-automate these kinds of tests by recording and playing back the path taken by an exploratory tester during a testing session. This also helps other agile team members recreate a defect and fix the bug.
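The record-and-playback idea can be sketched in a few lines. This is only an illustration of the concept, not how any particular quality management product works; `SessionRecorder`, `Cart` and `actions_for` are hypothetical names invented for the example.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple


@dataclass
class SessionRecorder:
    """Records the actions an exploratory tester performs so they can be replayed."""
    steps: List[Tuple[str, Tuple[Any, ...]]] = field(default_factory=list)

    def record(self, action: str, *args: Any) -> None:
        self.steps.append((action, args))

    def replay(self, actions: Dict[str, Callable[..., Any]]) -> List[Any]:
        """Re-run every recorded step against a fresh copy of the application."""
        return [actions[name](*args) for name, args in self.steps]


class Cart:
    """Hypothetical application under test."""

    def __init__(self) -> None:
        self.items: Dict[str, int] = {}

    def add(self, sku: str, qty: int) -> int:
        self.items[sku] = self.items.get(sku, 0) + qty
        return self.items[sku]

    def remove(self, sku: str) -> int:
        return self.items.pop(sku, 0)


def actions_for(cart: Cart) -> Dict[str, Callable[..., Any]]:
    """Map recorded action names to operations on a given cart."""
    return {"add": cart.add, "remove": cart.remove}


# The tester's session is captured step by step...
recorder = SessionRecorder()
recorder.record("add", "sku-1", 2)
recorder.record("add", "sku-1", 3)
recorder.record("remove", "sku-1")

# ...and later replayed by a teammate against a clean cart.
replayed = recorder.replay(actions_for(Cart()))
```

Because the steps are stored as data, the same session can be attached to a defect report and replayed by whoever picks up the bug.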

Challenge: Too Many Automation Tools

As part of a yearly survey titled "How the World Tests," Zephyr recently surveyed over 10,000 customers in more than 100 countries, and one of the main questions asked was how many projects were being done using Agile methodology. In 2016, a clear majority of projects across the board were run in an Agile/Scrum way, and almost 30% used a “Hybrid” or customized version of Agile. The survey found that one of the biggest barriers to using agile effectively had to do with test automation. Over 50% of respondents stated that their organizations did not have enough test automation or did not have enough time to run all the tests needed on fast-paced Agile projects.

An interesting finding from the 2016 survey is that over 70% of all customers were using multiple automation tools (an increase from 58% to 75% in just a year). Having multiple automation tools means multiple sets of test scripts, plans, execution runs and results, which can impede test automation efforts and cause serious maintenance issues. Properly designed and constructed test automation frameworks, such as the one described in the table below, can help by giving agile software testing teams one place, instead of several, to fix or extend test cases.

Layers in a Test Automation Framework

| Layer | Description |
| --- | --- |
| Layer 1 (Custom Code) | This is code specific to the team's needs and may include abstractions for interacting with page or view-level objects, communicating with web services, checking the database, etc. |
| Layer 2 (Framework) | Frameworks like Robot Framework or Cucumber allow teams to write code that focuses on the business problem being tested, rather than the specific user interface (UI) technology. In some cases, this enables the same test to be reused across different web browsers, mobile apps, etc. |
| Layer 3 (Driver) | The driver is the lowest-level component. It knows how to interact with the application’s specific UI. For example, Selenium WebDriver has different drivers that know how to manipulate Chrome, Firefox, Microsoft Edge, etc. |
| Layer 4 (Application) | This is the actual UI technology being tested, such as a web browser or a native iOS or Windows desktop application. |

A four-layer test automation framework like this is useful in helping an agile team streamline its testing activities and create blocks of code that can be reused for future tests. Selecting, implementing, and updating a test automation framework, however, needs to be done with the same care and thought that goes into writing production code. While test automation frameworks can dramatically cut test suite maintenance costs and improve productivity on Continuous Delivery DevOps projects, their proper implementation still takes time, skill and a great deal of experimentation.
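To make the layering more concrete, here is a small sketch of how the custom-code layer can isolate tests from the driver. `FakeDriver`, `LoginPage`, the locators and the credentials are all hypothetical; in a real suite the driver role would be played by something like Selenium WebDriver, and the check would be written in the team's chosen framework.

```python
from typing import Dict, List


class FakeDriver:
    """Stand-in for the driver layer; records calls instead of driving a browser."""

    def __init__(self) -> None:
        self.typed: Dict[str, str] = {}
        self.clicks: List[str] = []

    def type_into(self, locator: str, text: str) -> None:
        self.typed[locator] = text

    def click(self, locator: str) -> None:
        self.clicks.append(locator)


class LoginPage:
    """Custom-code layer: a page object that hides locators from the tests."""

    USERNAME = "#username"
    PASSWORD = "#password"
    SUBMIT = "#submit"

    def __init__(self, driver: FakeDriver) -> None:
        self.driver = driver

    def log_in(self, user: str, password: str) -> None:
        self.driver.type_into(self.USERNAME, user)
        self.driver.type_into(self.PASSWORD, password)
        self.driver.click(self.SUBMIT)


def check_user_can_log_in(driver: FakeDriver) -> bool:
    """Check expressed in business terms; only the page object knows the UI details."""
    LoginPage(driver).log_in("alice", "s3cret")
    return (
        driver.clicks == [LoginPage.SUBMIT]
        and driver.typed[LoginPage.USERNAME] == "alice"
    )
```

If a locator changes, only `LoginPage` needs updating, which is the "one place to fix" benefit a layered framework is meant to provide.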

Challenge: Snowflake Servers

"Snowflake servers" are long-running production servers that have been repeatedly reconfigured and modified over time with operating system upgrades, kernel patches, and/or system updates to fix security vulnerabilities. This leads to a server that is unique and distinct, like a snowflake, which requires manual configuration above and beyond that covered by automated deployment scripts.

A fully automated, script-driven Continuous Delivery pipeline works best when the elements that make up the pipeline are uniform. Automated configuration tools such as Puppet or Chef allow you to avoid snowflake servers by providing definition files that describe the configuration of the elements of a server. Using definition files (called manifests in Puppet, or recipes in Chef) is a key best practice in Infrastructure as Code, which allows environments (machines, network devices, operating systems, middleware, etc.) to be configured and specified in a fully automatable format rather than by physical hardware configuration or the use of interactive configuration tools.
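The core idea behind a definition file, declaring a desired state and converging each server toward it, can be sketched without any particular tool. The example below is not Puppet or Chef syntax; the `DESIRED` structure, package names and version strings are assumptions chosen for illustration.

```python
from typing import Dict, List

State = Dict[str, Dict[str, str]]

# Hypothetical desired state, playing the role of a definition file.
DESIRED: State = {
    "packages": {"nginx": "1.24", "openssl": "3.0"},
    "services": {"nginx": "running"},
}


def plan_changes(desired: State, actual: State) -> List[str]:
    """List the differences between the desired state and a server's actual state."""
    changes: List[str] = []
    for section, entries in desired.items():
        current = actual.get(section, {})
        for name, want in entries.items():
            have = current.get(name)
            if have != want:
                changes.append(f"{section}/{name}: {have} -> {want}")
    return changes


def converge(desired: State, actual: State) -> State:
    """Return a new state with every desired entry applied; safe to run repeatedly."""
    new_state: State = {section: dict(entries) for section, entries in actual.items()}
    for section, entries in desired.items():
        new_state.setdefault(section, {}).update(entries)
    return new_state
```

Running `converge` a second time produces no further changes, which is the idempotence that keeps servers built from the same definition uniform.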

Infrastructure as Code (IaC) has become increasingly widespread with the adoption of cloud computing and Infrastructure as a Service (IaaS), which relies on virtual machines and other resources that cloud providers like Amazon Web Services and Microsoft Azure supply on demand from their large pools of equipment installed in data centers. IaC lets DevOps teams automatically manage and provision IaaS resources using high-level programming languages. In practice, this means developers can control most or all IaaS resources via API calls to do things like start a server, configure load balancing, or start a Hadoop cluster. Many DevOps shops also let developers write or modify an IaC definition file to provision and deploy new applications instead of relying on operators or system administrators to manage the operational aspect of their DevOps environment.

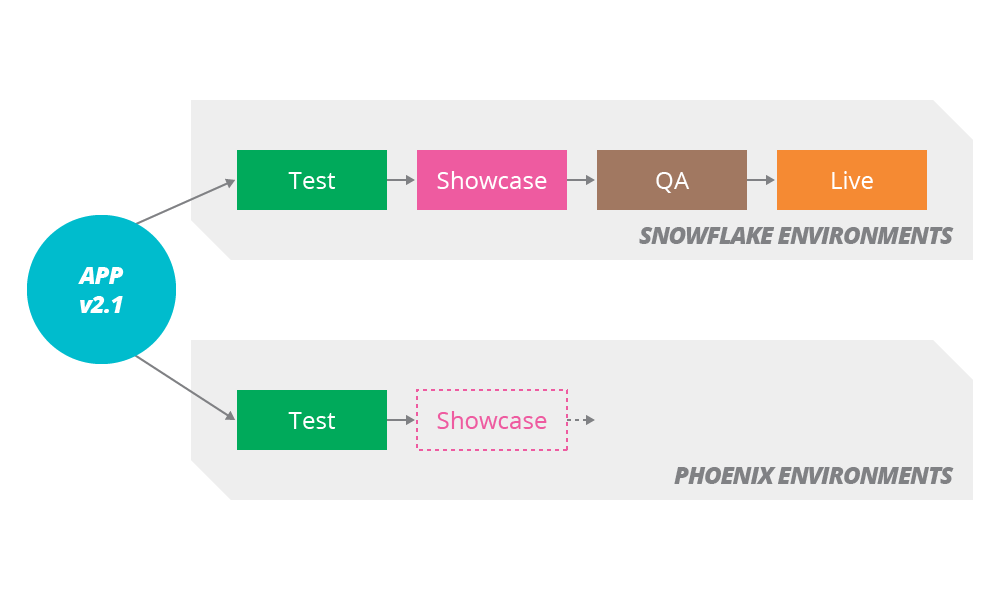

Snowflake Servers

Image Source: ThoughtWorks

Another advantage of defining your server operating environments with a tool like Chef or Puppet is that your configuration code can then be managed from within a version control system such as Git, which makes it easier to make environmental changes and roll back those changes if need be. Version control also provides an audit trail of all changes to your server environments, which can come in handy in regulated industries.

The use of phoenix servers is another way to avoid snowflake servers and ad hoc, unrecorded changes to server configurations. Phoenix servers are virtual servers that can be rebuilt from scratch at any time, like the mythical phoenix bird that regularly arises from the ashes of its predecessor. With phoenix servers, any change in application code, no matter how small, leads to redeployment of the complete application stack. This means creating a new server each time a new build is triggered. The new server is created from a base image, which is itself rebuilt every time the servers’ configuration changes. As a result, there is no need to apply configuration changes after the server is created.
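The rebuild rules described above can be modeled in a short simulation. This is a sketch under stated assumptions, not a real provisioning tool: `PhoenixFleet`, the image naming scheme and the configuration keys are hypothetical, and no actual servers or images are created.

```python
import hashlib
import json
from dataclasses import dataclass
from itertools import count
from typing import Dict


@dataclass(frozen=True)
class Server:
    server_id: int
    image_id: str
    build: str


class PhoenixFleet:
    """Simulates phoenix-style deployment: a new server for every build."""

    def __init__(self) -> None:
        self._ids = count(1)
        self._images: Dict[str, str] = {}  # config fingerprint -> image id

    def base_image(self, config: Dict[str, str]) -> str:
        """Reuse the image for an unchanged config; create a new one when it changes."""
        canonical = json.dumps(config, sort_keys=True).encode()
        fingerprint = hashlib.sha256(canonical).hexdigest()[:12]
        return self._images.setdefault(fingerprint, f"image-{fingerprint}")

    def deploy(self, config: Dict[str, str], build: str) -> Server:
        """Always create a fresh server from the base image; never patch in place."""
        return Server(next(self._ids), self.base_image(config), build)
```

Two builds deployed with the same configuration get different servers from the same image, while any configuration change yields a new image, so no server is ever modified after creation.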

A good example of phoenix servers in production is the server environment at Netflix, which uses a tool called Chaos Monkey that randomly disables production instances to make sure Netflix can survive this kind of failure without any customer impact. Chaos Monkey is one of an entire simian zoo of tools (Latency Monkey, Conformity Monkey, Doctor Monkey, Janitor Monkey, Security Monkey) designed to keep the company's cloud safe, secure, and highly available. Chaos Gorilla is similar to Chaos Monkey, but simulates an outage of an entire Amazon availability zone to verify that Netflix services can automatically re-balance to the functioning availability zones without manual intervention or any impact visible to the end user.
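The following sketch simulates the two kinds of failure injection described above against an in-memory model of a fleet. It is not Netflix's implementation; the function names, zone names and the simple capacity check are assumptions made for illustration.

```python
import random
from typing import Dict, List

Fleet = Dict[str, List[str]]  # availability zone -> instance ids


def terminate_random_instance(instances: List[str], rng: random.Random) -> str:
    """Chaos Monkey style: remove one randomly chosen instance and return its id."""
    victim = rng.choice(instances)
    instances.remove(victim)
    return victim


def zone_outage(fleet: Fleet, zone: str) -> Fleet:
    """Chaos Gorilla style: return a copy of the fleet with one whole zone emptied."""
    return {z: ([] if z == zone else list(ids)) for z, ids in fleet.items()}


def can_serve(fleet: Fleet, min_instances: int) -> bool:
    """Simplified health check: enough instances remain across all zones."""
    return sum(len(ids) for ids in fleet.values()) >= min_instances
```

A resilience test would inject one of these failures and then assert that `can_serve` still holds for the capacity the service needs.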

A Continuous Delivery pipeline is all about getting high-quality software into production, or into the hands of users, as quickly as possible. By automating as many manual tests in your pipeline as possible and using the right tools together with an automation framework to streamline your deployment architecture, it's relatively straightforward to make software deployments into low-key, low-risk events that can be performed at any time, on demand. You should also consider using version control and other agile development and testing practices that you're already using for application development to automate how you configure servers and provision your infrastructure. Like Netflix, you'll then be able to continuously test how fault-tolerant and reliable your Continuous Delivery pipeline actually is.
